Many IT teams today are falling into a dangerous “Hybrid Trap”: they manually build a target architecture and then ask AI to simply “smooth the edges” of the migrated code. In this article, we explain why those “edges” are actually where your most critical business logic is hidden—and why general-purpose AI is statistically unlikely to see it.

The Illusion of Progress in Legacy Environments

The promise of Generative AI in software development is massive, and its adoption keeps rising. We are flooded with news of Large Language Models (LLMs) reaching “superhuman” results in coding competitions and solving complex development tasks with ease. Benchmarks such as SWE-bench show steady, impressive progress in AI’s ability to handle modern codebases.

However, for IT directors managing mission-critical systems, a vital question remains: does this brilliance hold up under real business conditions, especially when dealing with legacy stacks?

THE DATA DISPARITY: The “1000x” Training Problem

Most successful AI systems are trained on extensive libraries of coding examples across multiple technologies. This approach requires a very solid set of examples and carefully crafted, verifiable tasks to utilize reinforcement learning.

For modern technologies like Java or Python, this data is abundant. For legacy technologies, the reality is starkly different. There are no coding competitions for legacy systems, and most of the available material compares legacy systems to modern ones rather than providing pure coding patterns.

The numbers from the Software Heritage dataset speak for themselves:

| Language | Number of files |
| --- | --- |
| JavaScript | 66.9 million |
| Java | 62.2 million |
| SQL | 4.5 million |
| PL/SQL | 111,327 |
| Oracle Forms | 0 |

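Legacy technologies often have on the order of 1000 times less training data than modern software stacks. In the table above, PL/SQL has roughly 600 times fewer files than JavaScript, and Oracle Forms has none at all. Even PL/SQL amounts to only about 2.5% of the SQL files, making it significantly less prominent in LLM pretraining.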
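The size of the gap can be checked with simple arithmetic. The snippet below is a minimal sketch that uses only the file counts quoted in the table; the dictionary and the `scarcity_ratio` helper are illustrative names, not part of any dataset tooling.

```python
# File counts as quoted in the table above (Software Heritage figures cited by this article).
FILE_COUNTS = {
    "JavaScript": 66_900_000,
    "Java": 62_200_000,
    "SQL": 4_500_000,
    "PL/SQL": 111_327,
    "Oracle Forms": 0,
}


def scarcity_ratio(modern: str, legacy: str) -> float:
    """Return how many times more files the modern language has than the legacy one."""
    legacy_count = FILE_COUNTS[legacy]
    if legacy_count == 0:
        # No files at all: the ratio is unbounded.
        return float("inf")
    return FILE_COUNTS[modern] / legacy_count


for legacy in ("PL/SQL", "Oracle Forms"):
    for modern in ("JavaScript", "Java"):
        print(f"{modern} vs {legacy}: {scarcity_ratio(modern, legacy):,.0f}x")

# Share of PL/SQL within the broader SQL file count.
plsql_share = FILE_COUNTS["PL/SQL"] / FILE_COUNTS["SQL"]
print(f"PL/SQL share of SQL files: {plsql_share:.1%}")
```

With these figures, PL/SQL comes out at several hundred times fewer files than JavaScript or Java, while for Oracle Forms the ratio is unbounded because there is nothing to divide by.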

THE “BINARY TRAP”: Why AI Can’t See Oracle Forms

One of the biggest obstacles for AI in this space is how Oracle Forms code is stored. Form modules are kept in a proprietary binary format (.fmb files) rather than as plain-text source. Public code datasets typically discard binary files when harvesting data from repositories.

Because the “brain” of the AI has never been fed the actual structural logic of these binary files, it struggles on a basic level:

- Syntax Failure: AI frequently messes up the syntax for “exotic” or older languages.

- Architectural Blindness: It lacks architectural insight and authoritative sources to understand how these systems were built 30-40 years ago.

- Knowledge Gaps: Even if reference documentation is available, AI cannot build its knowledge based purely on a manual without the context of massive training examples.
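To make the binary filtering concrete, here is a minimal sketch of the kind of check a data-harvesting pipeline might apply. It assumes a widely used heuristic (a NUL byte near the start of the file marks it as binary); it is not the actual filter of any specific dataset, and the file names and byte contents are made up for illustration.

```python
def looks_binary(data: bytes, probe_size: int = 8000) -> bool:
    """Heuristic: treat content as binary if a NUL byte appears in the first probe_size bytes."""
    return b"\x00" in data[:probe_size]


def filter_text_files(files: dict[str, bytes]) -> dict[str, bytes]:
    """Keep only the files that the heuristic classifies as text."""
    return {path: data for path, data in files.items() if not looks_binary(data)}


# Hypothetical repository contents; the .fmb bytes are invented and do not
# reflect the real Oracle Forms file layout.
repo = {
    "orders_pkg.sql": b"CREATE OR REPLACE PACKAGE orders_pkg AS\nEND orders_pkg;\n",
    "orders.fmb": b"\x00\x01FORM\x00\x00binary-payload",
}

kept = filter_text_files(repo)
print(sorted(kept))
```

Under this assumption, the PL/SQL package survives the filter while the form module is dropped before it can ever reach a training corpus.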

Long-Term Planning vs. “Smoothing the Edges”

Modernization is not a single task; it is a structured plan with thousands of steps. Legacy 4GL technologies often enforce their own patterns and practices that are very difficult to represent in today’s systems without actually mimicking the original design.

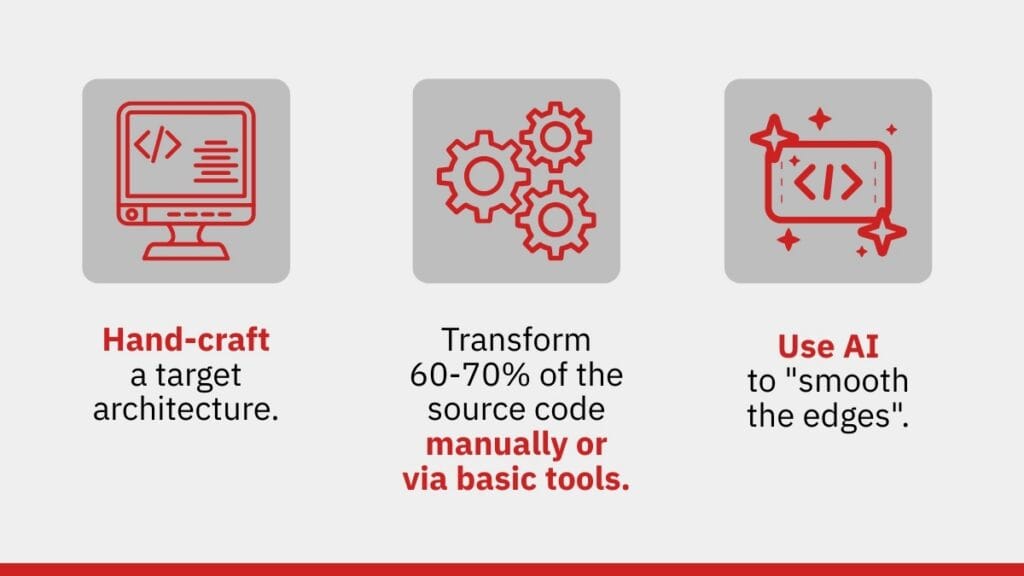

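Currently, AI systems are incapable of executing these long-term structured plans. This has led many teams to adopt a dangerous “Hybrid Approach”: they build the target architecture manually and then ask AI to simply “smooth the edges” of the migrated code.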

This is the trap. Those “edges” are exactly where the actual business rules, constraints, and critical operational semantics are hidden. Systems modernized this way may look good “on the surface,” but under the hood, they introduce tricky errors and inconsistent logic that destroy confidence and quality.
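One example of such a hidden semantic is SQL-style three-valued logic, where a comparison involving NULL is neither true nor false. The sketch below is a simplified illustration under stated assumptions: the approval rule and all function names are hypothetical, and `None` stands in for NULL.

```python
from typing import Optional


def sql_not_equal(a: Optional[int], b: Optional[int]) -> Optional[bool]:
    """Emulate a SQL/PL/SQL `a != b`: the result is NULL (None) if either operand is NULL."""
    if a is None or b is None:
        return None
    return a != b


def legacy_needs_approval(old_limit: Optional[int], new_limit: Optional[int]) -> bool:
    """PL/SQL-style IF: the branch is taken only when the condition is TRUE.

    A NULL condition falls through to the ELSE branch, so no approval is requested.
    """
    return sql_not_equal(old_limit, new_limit) is True


def naive_port_needs_approval(old_limit: Optional[int], new_limit: Optional[int]) -> bool:
    """A literal port to two-valued logic: `None != 500` is simply True."""
    return old_limit != new_limit


# Hypothetical case: the old credit limit was never set (NULL).
print(legacy_needs_approval(None, 500))      # legacy behavior
print(naive_port_needs_approval(None, 500))  # behavior after a naive port
```

The two functions look equivalent on the surface, yet they disagree whenever one of the values is missing, which is the kind of discrepancy that a quick “edge smoothing” pass can introduce without anyone noticing.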

The ReForms21 Perspective: Engineering over Hallucination

At ReForms21, we recognize that fully automated, AI-driven code conversion in legacy environments is still a promise rather than a proven practice. To modernize a large-scale system safely, you cannot rely on a model that guesses syntax.

We bridge these gaps through Structural Refactoring and Knowledge Extraction. Instead of asking an AI to guess what a PL/SQL trigger does, we build an actual technical and operational model of the whole system. We iteratively construct a reference, high-confidence map of major business processes based on actual evidence, not probabilistic guesses.

AI is a powerful tool for supporting tasks, but when it comes to the core logic of your enterprise, you need the certainty of a structural engineering approach.

THE PATH FORWARD: From Strategy to Precision

Recognizing the “Scarcity Gap” is the first step toward a successful modernization strategy. While general-purpose AI is a powerful assistant for “smoothing the edges” of modern code, it lacks the architectural depth to handle the weight of 30 years of mission-critical business rules hidden within your legacy monolith.

To move your project from strategic consideration to engineering certainty, you need to identify exactly what is “invisible” to standard AI models within your specific environment. You need to know if your system is built on standard patterns or if it is riddled with the “technical bombs” that probabilistic models fail to detect.

Coming up next: In our follow-up article, “Oracle Forms Migration. From Theory to Practice: Assessing Your System’s AI-Readiness,” we move beyond the high-level risks and into the trenches. We will share our proprietary Diagnostic Checklist—a technical tool designed to help you audit your environment for “hidden business rules,” from Three-Valued Logic traps to binary communication dependencies.

Don’t miss the practical guide that determines if your system is a candidate for simple AI assistance or if it requires the surgical precision of structural refactoring.